Multi-Homography Stitching (Paper@GCPR2023)

Parallax-aware Image Stitching based on Homographic Decomposition

GCPR'2023

Simon Seibt¹, Michael Arold¹, Bartosz von Rymon Lipinski¹, Uwe Wienkopf² and Marc Erich Latoschik³

² Institute for Applied Computer Science, Nuremberg Institute of Technology

³ Human-Computer Interaction Group, University of Wuerzburg

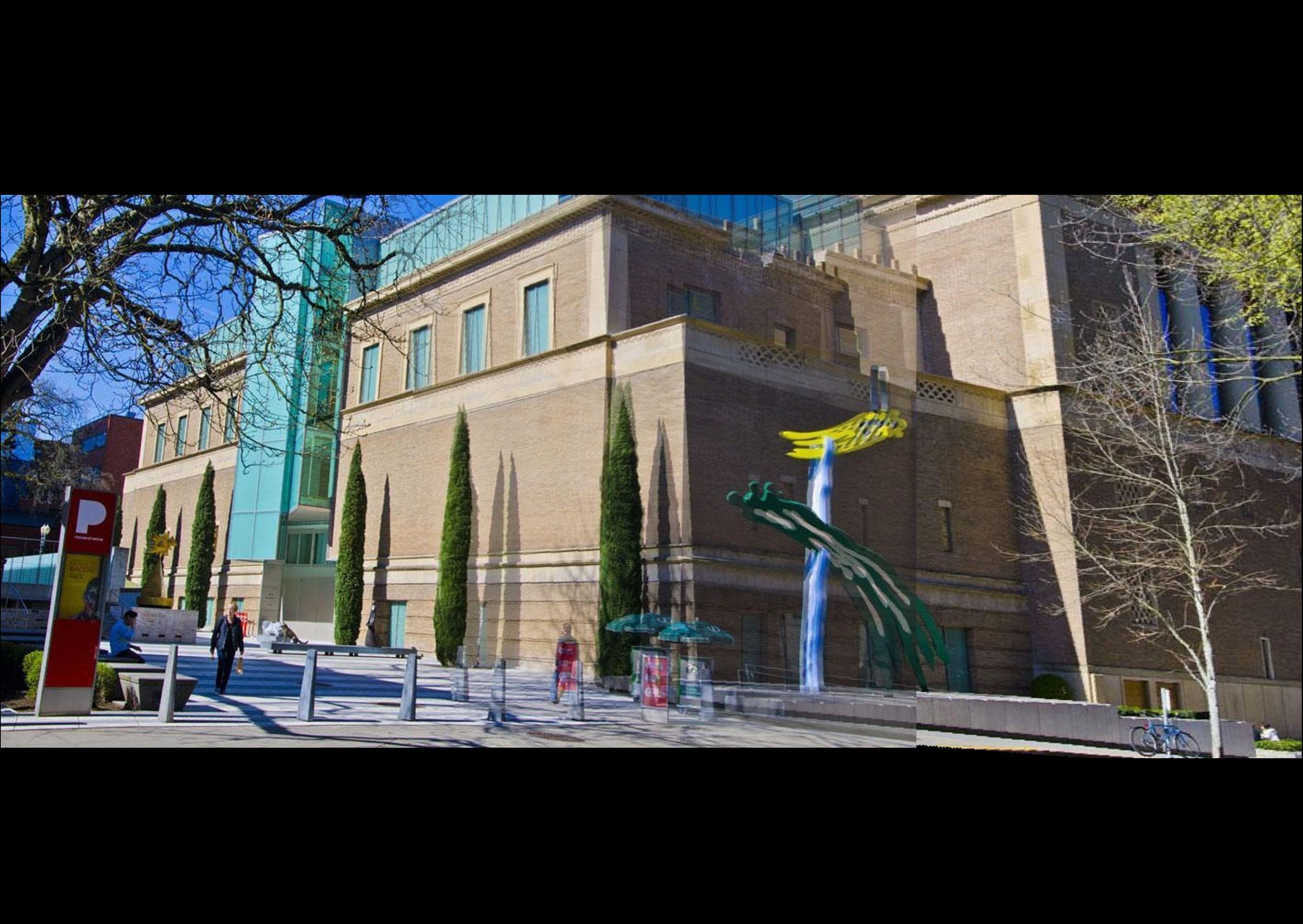

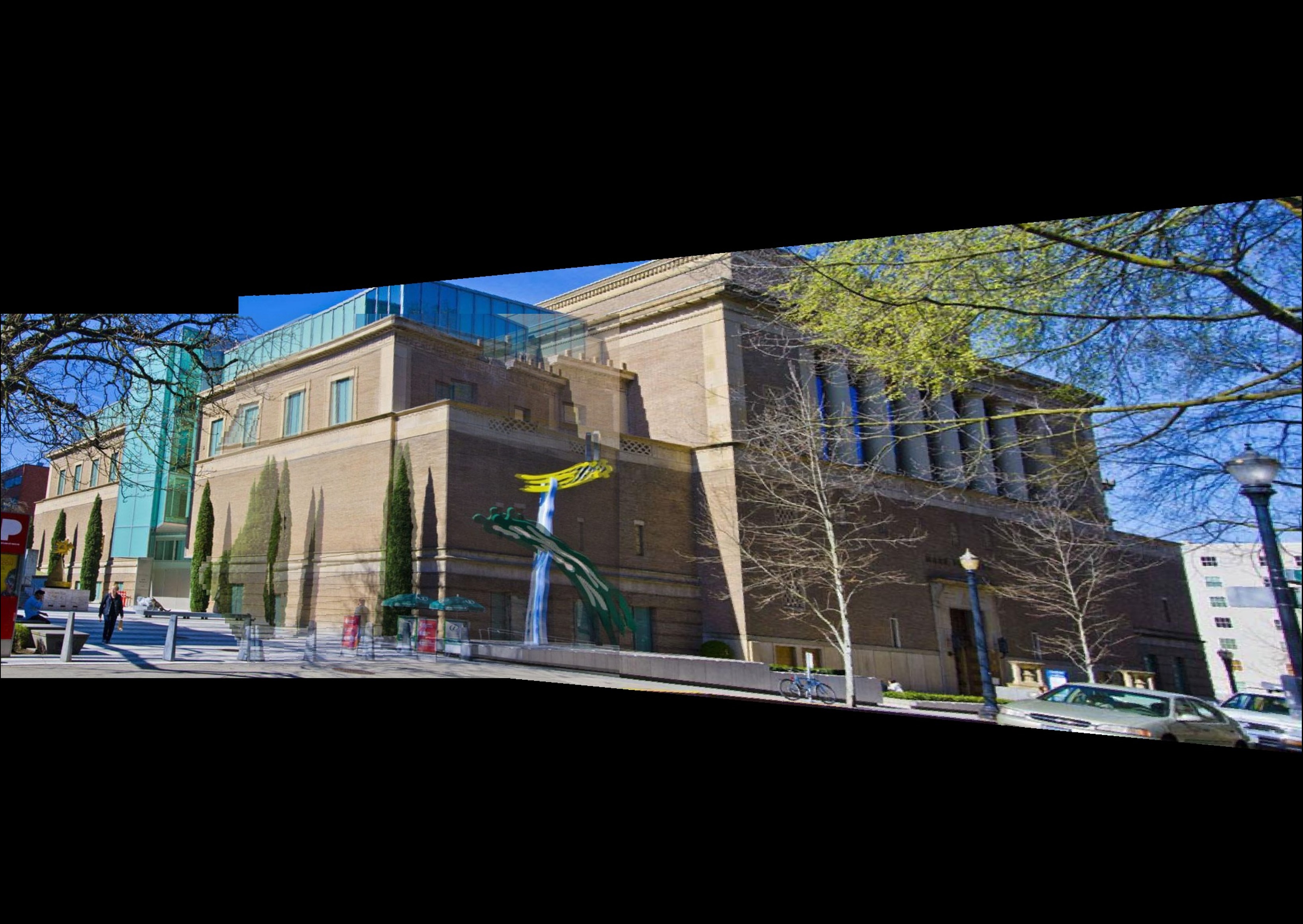

Visual comparison of APAP [1] with our approach (MHS)

Abstract

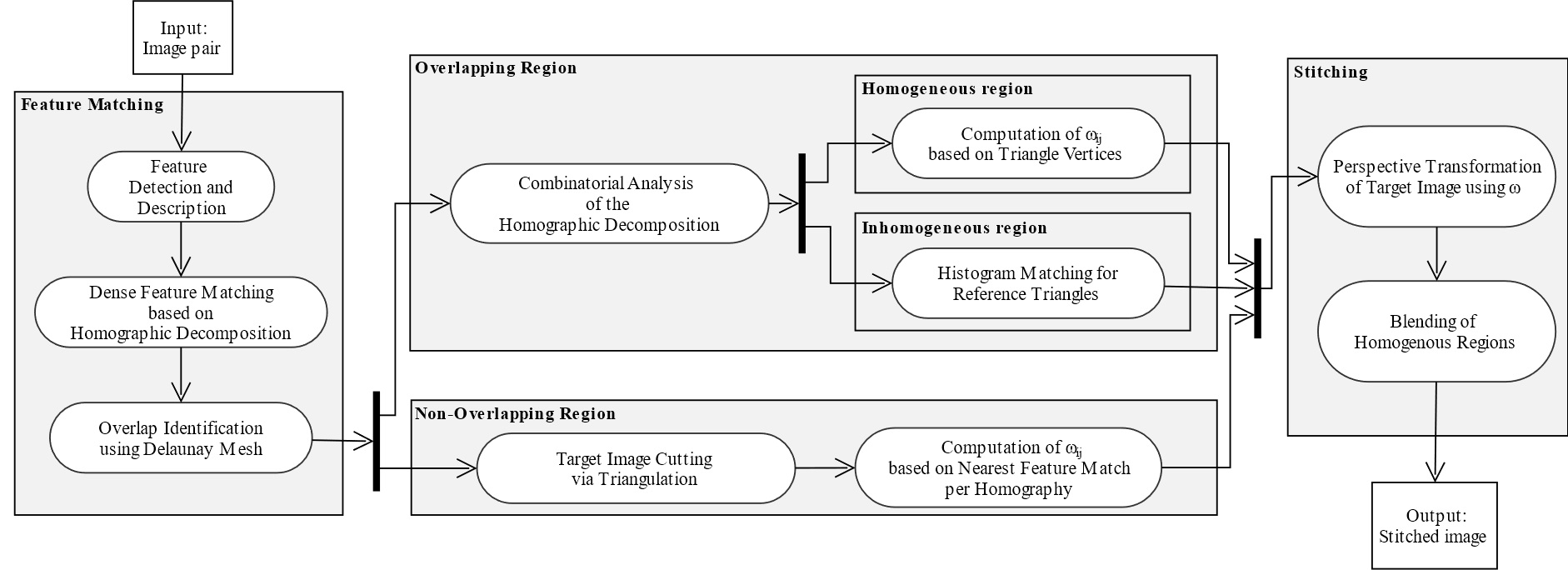

Image stitching plays a crucial role in various computer vision applications, such as panoramic photography, video production, medical imaging and satellite imagery. It makes it possible to align two images captured from different viewpoints onto a single image with a wider field of view. However, for 3D scenes with high depth complexity and images captured from two different positions, the resulting image pair may exhibit significant parallaxes. Stitching images with multiple or large apparent motion shifts remains a challenging task, and existing methods often fail in such cases. In this paper, a novel image stitching pipeline is introduced that addresses this challenge: First, iterative dense feature matching is performed, which results in a multi-homography decomposition. Then, this output is used to compute a per-pixel multidimensional weight map of the estimated homographies for image alignment via weighted warping. Additionally, the homographic image space decomposition is exploited using combinatorial analysis to identify parallaxes, resulting in a parallax-aware overlapping region: Parallax-free overlapping areas only require weighted warping and blending. For parallax areas, these operations are omitted to avoid ghosting artifacts. Instead, histogram- and mask-based color mapping is performed to ensure visual color consistency. The presented experiments demonstrate that the proposed method provides superior results regarding precision and handling of parallaxes.
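To make the idea of weighted warping with a per-pixel weight map more concrete, the following is a minimal sketch and not the authors' implementation. It assumes that each pixel carries one non-negative weight per estimated homography and that the warped position is the weighted average of the positions predicted by the individual homographies. The function names `apply_homography` and `weighted_warp_coords` and the toy translation matrices are hypothetical and chosen only for illustration.

```python
import numpy as np

def apply_homography(H, pts):
    """Map an (N, 2) array of pixel coordinates through a 3x3 homography."""
    homog = np.hstack([pts, np.ones((len(pts), 1))])  # to homogeneous coordinates
    mapped = homog @ H.T
    return mapped[:, :2] / mapped[:, 2:3]             # back to Cartesian

def weighted_warp_coords(homographies, weights, pts):
    """Blend the positions predicted by K homographies for each pixel.

    homographies: (K, 3, 3), weights: (N, K) non-negative, pts: (N, 2).
    Assumption: blending happens on warped positions, not on matrices.
    """
    weights = weights / weights.sum(axis=1, keepdims=True)  # normalize per pixel
    mapped = np.stack([apply_homography(H, pts) for H in homographies], axis=1)
    return (weights[:, :, None] * mapped).sum(axis=1)       # (N, 2)

# Toy example: two homographies that are pure translations in x.
H_a = np.array([[1.0, 0.0, 10.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]])
H_b = np.array([[1.0, 0.0, 20.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]])
pts = np.array([[0.0, 0.0], [5.0, 5.0]])
weights = np.array([[1.0, 0.0],   # first pixel follows H_a only
                    [0.5, 0.5]])  # second pixel mixes both equally
warped = weighted_warp_coords(np.stack([H_a, H_b]), weights, pts)
print(warped)
```

In this toy setup the first pixel moves exactly as `H_a` dictates, while the second lands halfway between the two predictions. A smooth weight map would therefore give a smooth transition between regions dominated by different homographies.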

Paper

Pipeline

(Sub-activities are shown in white.)
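The color mapping step for parallax areas is described in the abstract only as "histogram- and mask-based". As a rough illustration, the sketch below uses classical histogram matching restricted to masked pixels. This is an assumption about the general technique, not the paper's exact procedure. The function `match_histogram_masked` and the tiny single-channel arrays are hypothetical.

```python
import numpy as np

def match_histogram_masked(source, reference, src_mask, ref_mask):
    """Remap masked pixels of one channel of `source` so that their
    empirical CDF follows that of the masked `reference` pixels."""
    src_vals = source[src_mask]
    ref_vals = reference[ref_mask]
    # Empirical CDFs computed over the masked pixels only.
    s_levels, s_counts = np.unique(src_vals, return_counts=True)
    r_levels, r_counts = np.unique(ref_vals, return_counts=True)
    s_cdf = np.cumsum(s_counts) / src_vals.size
    r_cdf = np.cumsum(r_counts) / ref_vals.size
    # For every source level, look up the reference level at the same quantile.
    mapped_levels = np.interp(s_cdf, r_cdf, r_levels)
    out = source.astype(np.float64).copy()
    out[src_mask] = np.interp(src_vals, s_levels, mapped_levels)
    return out  # pixels outside src_mask are left unchanged

# Toy example: the reference is a brighter version of the source.
source = np.array([[0.0, 1.0], [2.0, 3.0]])
reference = source + 10.0
full = np.ones_like(source, dtype=bool)
matched = match_histogram_masked(source, reference, full, full)
print(matched)
```

For a color image, one option is to apply the function to each channel separately. The masks would then select, for example, the parallax region in one image and the corresponding parallax-free overlap in the other.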

Visual Results

Compared methods (one result panel each): Global Homography, APAP [1], ELA [2], Proposed (MHS)

Results from Photoshop v25.2

The Photomerge function in Photoshop is based on seam cutting, a different approach from the warping-based methods above, so its results cannot be compared directly. Because images are joined along a seam rather than blended, no ghosting artifacts occur. However, an incorrectly computed seam can cause the images to be stitched incorrectly.

Photoshop variants shown (one result panel each): Standard Photomerge, Photomerge with correction of geometric distortion

The scenes “Building” and “Adobe” could not be stitched using Photoshop due to alignment issues in the image pairs. Possible causes: for “Building”, insufficient overlap combined with too many parallaxes; for “Adobe”, perspectives that differ too much and parallaxes that are too large.

BibTeX

@inproceedings{Seibt2023MHS,
  author    = {Seibt, Simon and Arold, Michael and Von Rymon Lipinski, Bartosz and Wienkopf, Uwe and Latoschik, Marc Erich},
  title     = {Parallax-aware Image Stitching based on Homographic Decomposition},
  booktitle = {Pattern Recognition (Proceedings of DAGM GCPR)},
  year      = {2023},
}

References

| [1] | Zaragoza, J., Chin, T.J., Brown, M.S., Suter, D.: As-projective-as-possible image stitching with moving DLT. In: IEEE Conference on Computer Vision and Pattern Recognition. pp. 2339–2346 (2013) |

| [2] | Li, J., Wang, Z., Lai, S., Zhai, Y., Zhang, M.: Parallax-tolerant image stitching based on robust elastic warping. IEEE Transactions on Multimedia, pp. 1672–1687 (2018) |

| [3] | Zhang, F., Liu, F.: Parallax-tolerant image stitching. In: IEEE Conference on Computer Vision and Pattern Recognition. pp. 3262–3269 (2014) |

| [4] | Hirschmuller, H., Scharstein, D.: Evaluation of cost functions for stereo matching. In: IEEE Conference on Computer Vision and Pattern Recognition (2007) |

| [5] | Scharstein, D., Pal, C.: Learning conditional random fields for stereo. In: IEEE Conference on Computer Vision and Pattern Recognition (2007) |